Since the beginning of 2022, the dominant trend in the technology industry has been “artificial intelligence”. The concept isn’t new (many believe the term AI has been used to describe the way computers work since at least the 1980s), but it has once again captured the imagination of the investing public.

When we talk about AI, it’s important to understand what we mean. Artificial intelligence can be divided into seven broad categories – most of which are in development and don’t exist yet.

The type of artificial intelligence that everyone is interested in falls into the so-called limited-memory AI category. This is where large language models (LLMs) come into play.

They are based on deep learning techniques and trained on huge datasets, typically containing billions of words from different sources such as websites, books and articles.

This extensive training enables LLMs to understand the nuances of language, grammar, context, and even some aspects of general knowledge.

Some popular LLMs, such as OpenAI’s GPT-3, use a type of neural network called a transformer, which allows them to handle complex language tasks with remarkable proficiency. These models can perform a wide range of tasks, including:

- Answering questions

- Summarizing text

- Translating between languages

- Creating content

- Engaging in interactive conversations with users

De facto limitations and a lack of applications

The AI industry faces huge limitations. The technology works well when you ask for something simple and predictable, but falls apart when you ask for something slightly more complex, such as generating the image you want from a simple four-sentence description.

There is, the industry admits, a lot of work to be done before real progress can be made.

The problem? The whole AI experiment is extremely expensive, and costs are accelerating far beyond advances in its usefulness and ability to engage consumers to generate revenue.

OpenAI, the current leader in LLMs, is expected to lose $5 billion this year, which represents half of its total investment.

The losses grow as the company signs up more customers and as its model improves. There is a huge lack of economically viable applications for which this technology can be used. Attempts to apply this technology in meaningful ways have failed miserably.

- Air Canada’s AI customer service assistant promised a customer a discounted airfare, and the economic case for it collapsed when a Canadian court said the company is liable for anything an AI assistant tells a customer.

- Rules and orders across the United States have banned, piecemeal, the use of artificial intelligence in court cases, following a series of high-profile incidents involving legal texts fabricated by artificial intelligence programs.

- Major demonstrations were later revealed to have been completely faked.

- Google’s new AI summary at the top of the search page takes about 10 times more energy to produce than the search itself and has almost zero utility as far as the end user is concerned.

- Revenue in the artificial intelligence space is almost exclusively concentrated in hardware, with little prospect of collecting revenue from end users.

- There are also the huge energy requirements needed to make it all work.

- To make matters worse, further development will likely become more expensive, not cheaper.

Difficulties with processors

The hardware industry is critical to AI’s growth. Processor designers exhausted their ability to increase clock speeds almost two decades ago, while single-thread computing performance peaked as early as 2015.

Progress in processor design now comes mostly from increasing the number of “cores” by shrinking their size, although that approach is expected to be exhausted next year when the 2nm process comes online.

Terms like “3nm” and “2nm” processes refer to the specific architecture and design rules TSMC uses for a family of semiconductors.

Reductions in node size correspond to smaller transistor sizes, so more transistors can fit on one processor, leading to increased speed and more efficient power consumption. This means that, starting next year, AI can’t rely on efficiency gains from hardware to close the cost gap, since we’re already close to the maximum theoretical limit without fundamentally redesigning how processors work.

New customers demand new capacity, so every time another business signs up, costs rise, making it doubtful that buying capacity in volume will ever reach a tipping point at which costs fall.

- With this data, a prudent businessman would cut his losses in the AI space.

- The skyrocketing cost, along with the questionable utility, of the technology makes it look like a big money-losing business.

However, investment in artificial intelligence has only grown.

What’s going on?

The easy money

What we are seeing is a major impact of the long era of easy money, which, despite the Fed’s official rate hikes, is still ongoing.

The technology industry in particular has been a major beneficiary of the “easy money” phenomenon. Easy money has been around for so long that entire industries, especially technology, are built and designed around it.

This is how food delivery apps, which have never turned a profit and are on track to lose $20 billion in 2024 alone, continue to operate.

The tech industry will shell out billions to invest in questionable business plans simply because they are pitched as software development.

We are seeing many of the same patterns in the AI boom as we saw years ago with the WeWork fiasco.

- Both try to deal with practical problems. Neither changes the fundamentals of its customer base.

- Both, despite being nominally capital-based, are highly exposed to variable operating costs that cannot easily be reduced through economies of scale.

- Both apply an extra layer of spending to do something more than they did before.

Despite this, companies like Google and Microsoft are willing to pour huge resources into the project. The main reason is that, for them, the resources are relatively insignificant.

Big tech companies – loaded with decades of cheap money – have enough cash on hand to buy the entire global AI industry outright.

A $5 billion loss isn’t particularly embarrassing for a company like Microsoft. The fear of losing technological primacy is greater than the cost of a few dollars in a technological war.

OpenAI and convertible notes

OpenAI’s new funding round is expected to come in the form of convertible notes, while its $150 billion valuation will depend on whether the ChatGPT maker can transform its corporate structure and remove the profit cap for investors.

Convertible notes are a form of loan (though not a loan in the traditional sense) granted to a startup, usually without requiring the valuation, i.e. the estimate of the company’s value, to be fixed at the time of signing.

The essence of the convertible note (there are many variations) is that it is settled in one of two ways: the investor gets the investment back with interest, or the investment is converted into shares of the company (convertible = converts into shares).
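The two outcomes described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class name, the simple (non-compounding) interest, and all figures are hypothetical and are not the terms of OpenAI’s notes, which, like most real notes, may add discounts, valuation caps and other variations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConvertibleNote:
    """Hypothetical, simplified convertible note (illustration only)."""
    principal: float        # amount invested, in dollars
    annual_interest: float  # simple annual interest rate, e.g. 0.05 for 5%
    years: float            # time elapsed until repayment or conversion

    def balance(self) -> float:
        """Principal plus accrued simple interest."""
        return self.principal * (1 + self.annual_interest * self.years)

    def repay(self) -> float:
        """Outcome 1: the investor gets the money back with interest."""
        return self.balance()

    def convert(self, price_per_share: float) -> float:
        """Outcome 2: the balance is converted into shares at a given price."""
        if price_per_share <= 0:
            raise ValueError("price_per_share must be positive")
        return self.balance() / price_per_share


# Made-up numbers: $1 million invested at 5% simple interest for two years.
note = ConvertibleNote(principal=1_000_000, annual_interest=0.05, years=2)
cash_back = note.repay()                            # repayment in dollars
shares_high = note.convert(price_per_share=10.0)    # shares at a higher price
shares_low = note.convert(price_per_share=5.0)      # shares at a lower price
```

In this simplified model, the share price used at conversion is what ties the note to the company’s valuation: the same balance buys twice as many shares when the price per share is halved.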

The previously undisclosed details of the $6.5 billion financing show how far OpenAI, the world’s most valuable artificial intelligence startup, has come from its origins as a research-based nonprofit, and the structural changes it is willing to make to attract more and more investment to fund the costly pursuit of artificial general intelligence (AGI), or AI that surpasses human intelligence.

The large funding round saw strong demand from investors and could be finalized in the next two weeks given OpenAI’s rapid revenue growth.

Existing investors such as Thrive Capital and Khosla Ventures, as well as Microsoft, are expected to participate. New investors, including Nvidia and Apple, also plan to invest. Sequoia Capital is also in talks to return as an investor.

If the restructuring is unsuccessful, OpenAI will have to renegotiate its valuation with the investors whose notes are to be converted into shares, likely at a lower figure.

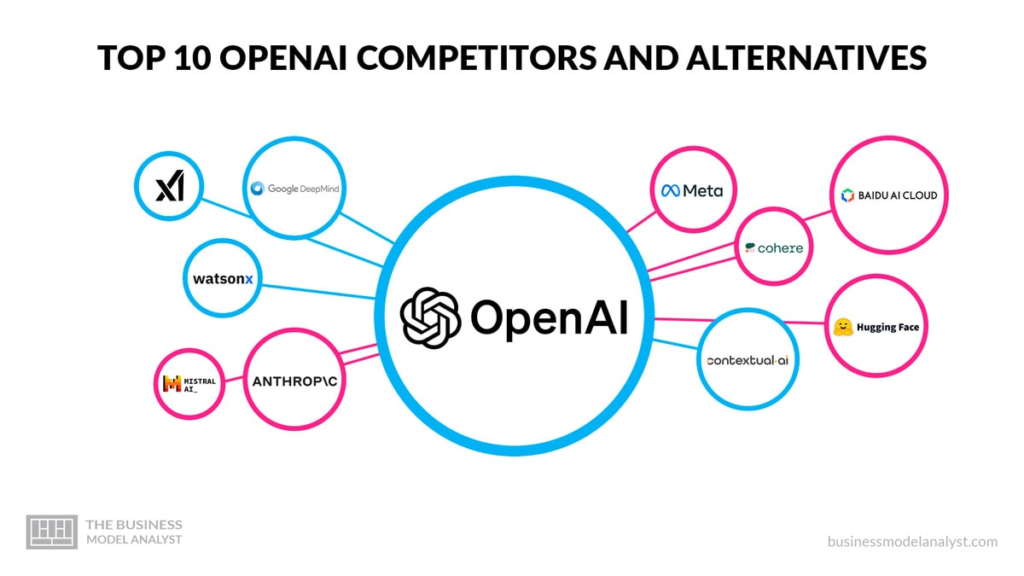

Removing the profit cap would require approval from OpenAI’s nonprofit board, which consists of CEO Sam Altman, entrepreneur Bret Taylor and seven other members. The company has also been in discussions with lawyers about converting its non-profit structure to a for-profit one, similar to those used by competitors such as Anthropic and xAI.

It is unclear whether such fundamental corporate structural changes could occur. Removing the profit cap, which limits potential returns for investors in OpenAI’s for-profit subsidiary, would give early investors an even bigger win. It could also raise questions about OpenAI’s governance and departure from its non-profit mission.

Founded in 2015 as a non-profit research project with the goal of building artificial intelligence for the benefit of humanity, the San Francisco-based AI lab is currently controlled by a non-profit parent organization.

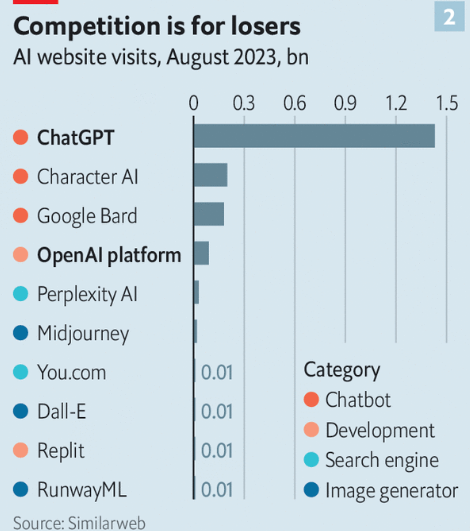

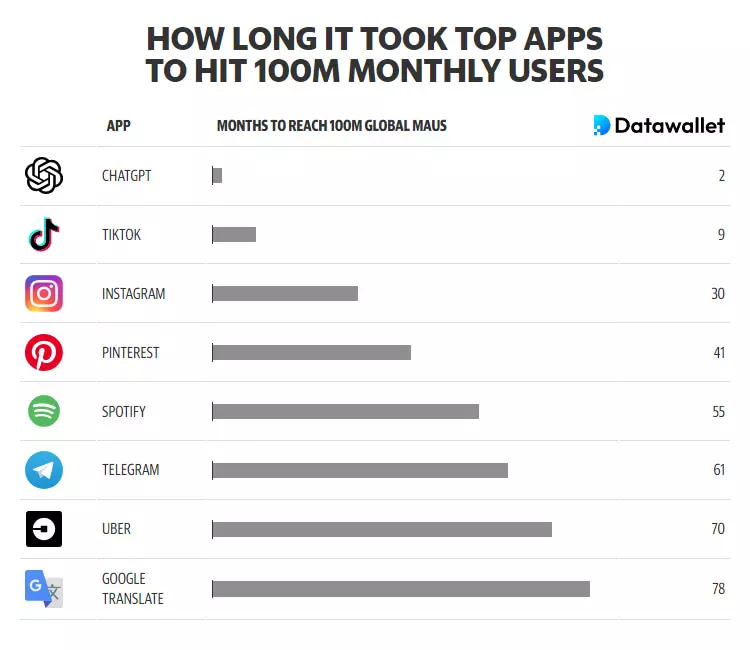

OpenAI has accelerated its commercialization efforts by selling subscription-based services like ChatGPT, which now boasts over 200 million users, to consumers and businesses. Existing investors are subject to a limited return on their investment, with any additional returns directed to the nonprofit.
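The arithmetic of a capped return can be sketched as follows. This is a minimal illustration, assuming the cap is expressed as a multiple of the amount invested; the function name, the multiple and the dollar figures are hypothetical and are not OpenAI’s actual terms.

```python
def split_capped_return(
    invested: float, gross_return: float, cap_multiple: float
) -> tuple[float, float]:
    """Split a return between a capped investor and the nonprofit.

    The investor receives at most invested * cap_multiple (an assumed
    form of the cap); anything above that goes to the nonprofit.
    """
    if invested < 0 or gross_return < 0 or cap_multiple < 0:
        raise ValueError("all arguments must be non-negative")
    cap = invested * cap_multiple
    to_investor = min(gross_return, cap)
    to_nonprofit = gross_return - to_investor
    return to_investor, to_nonprofit


# Made-up figures: $10 invested with a hypothetical 10x cap.
above_cap = split_capped_return(invested=10.0, gross_return=150.0, cap_multiple=10.0)
below_cap = split_capped_return(invested=10.0, gross_return=40.0, cap_multiple=10.0)
```

Under these assumptions, removing the cap amounts to letting the investor keep the whole return, which is why it would give early investors an even bigger win.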
