On November 30, 2022, OpenAI, a company until then unknown to many, opened its artificial intelligence model ChatGPT to the public. The release stirred the technological waters, as the platform opened up countless possibilities for legitimate (and not so legitimate) uses. The story of OpenAI itself is a typical story of good intentions that were ultimately not borne out. The text that follows is the product of monitoring developments since the beginning of the year.

As its name (Open) indicates, OpenAI started as a non-profit artificial intelligence research laboratory (with Elon Musk among the original founders). Today it consists of two parts: OpenAI Inc, which remains a non-profit organization, and OpenAI Limited Partnership, which is a for-profit entity. The main investor in the latter is Microsoft, which seems to have finally found a way to challenge Google’s primacy on the web.

In recent years there has been an upsurge in computing and other applications based on Artificial Intelligence (AI). Such applications include search engines (e.g. Google), recommendation systems (e.g. Netflix), speech recognition (e.g. Siri and Alexa), strategy games (e.g. Go), but also more specialized applications, such as identifying potential targets on unmanned platforms of all types, new forms of detection and acquisition, etc.

The medium-term goals of AI are reasoning, knowledge representation, planning, learning and natural language processing (NLP). One of the main long-term goals is the creation of Artificial General Intelligence, a form of AI that would fully match human intelligence.

Research into AI is nothing new. In fact, the first indications that digital computers can simulate formal reasoning go back to the famous Church-Turing Thesis. As early as the 1950s, various researchers began to work on AI and the challenges it raised. However, in the mid-1970s, in response to intense criticism and limited results, support for the related research programs was dramatically reduced or cut altogether. This period is known as the AI Winter. AI re-emerged strongly at the end of the 20th and the beginning of the 21st century, driven essentially by two research papers, one from Google and one from OpenAI itself.

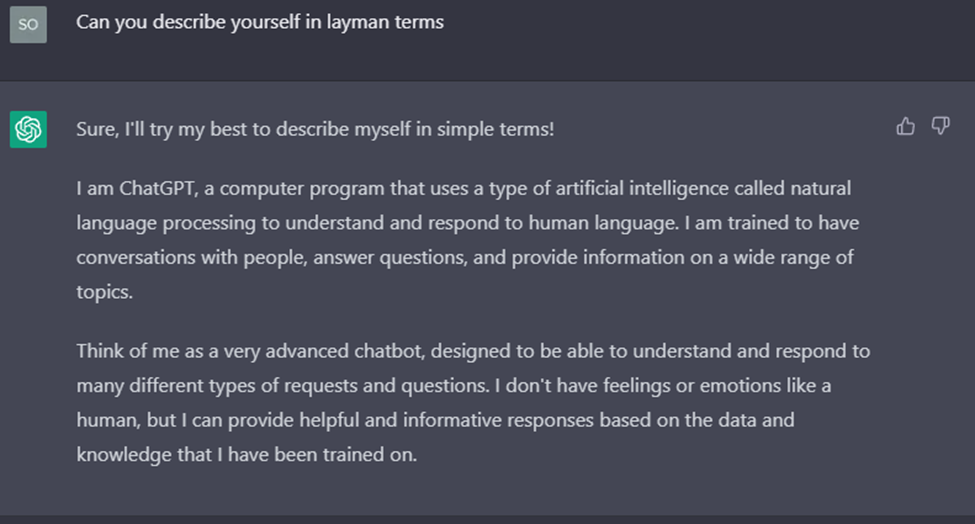

But what is ChatGPT? Let’s ask it directly!

ChatGPT is essentially an interface to the same company’s Large Language Model (LLM), GPT (Generative Pre-trained Transformer), which is now in version 4 (released March 14, 2023). Put simply, an LLM is a model “trained” on a huge amount of text data. Of course, it’s actually not that simple, and there are several (quite complex) methods by which these models “learn”, such as supervised learning (where the model is given pairs of labeled input/output data), reinforcement learning (where the system learns by interacting with its environment and receiving rewards), etc.
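To make the difference between the two learning styles more concrete, here is a minimal toy sketch in Python. It is not how GPT or ChatGPT is actually trained; the function names `train_supervised` and `train_reinforcement`, the data and all parameter values are assumptions made up for illustration. The first function learns from labeled input/output pairs, while the second never sees the "right answer" and only learns from the rewards it receives for its actions.

```python
import random


def train_supervised(pairs, lr=0.01, epochs=200):
    """Toy supervised learning: fit y = w * x from labeled (x, y) pairs."""
    w = 0.0
    for _ in range(epochs):
        for x, y in pairs:
            error = w * x - y      # how far the prediction is from the label
            w -= lr * error * x    # gradient step on the squared error
    return w


def train_reinforcement(reward_fn, n_actions, steps=2000, epsilon=0.1, seed=0):
    """Toy reinforcement learning: an epsilon-greedy agent that estimates
    the value of each action purely from the rewards it observes."""
    rng = random.Random(seed)
    values = [0.0] * n_actions     # running average reward per action
    counts = [0] * n_actions
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(n_actions)                        # explore
        else:
            action = max(range(n_actions), key=lambda a: values[a])  # exploit
        reward = reward_fn(action)
        counts[action] += 1
        values[action] += (reward - values[action]) / counts[action]
    return values


if __name__ == "__main__":
    # Supervised: every input comes with its correct output (here y = 2x).
    print(train_supervised([(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]))

    # Reinforcement: the agent only sees a reward for the action it tried.
    hypothetical_rewards = [0.2, 0.8, 0.5]  # made-up reward per action
    print(train_reinforcement(lambda a: hypothetical_rewards[a], n_actions=3))
```

In the supervised case the "teacher" is the label attached to each example; in the reinforcement case the only feedback is the reward, so the agent has to try actions out before it can tell which one is best.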

For example, the original GPT was trained on part (7,000 books with a total size of 4.5 GB) of a dataset known as BookCorpus, which includes about 11,000 unpublished books scattered across the internet. For comparison, the GPT-3 model used 570 GB of text, well over 100 times as much!

LLMs are used to build Natural Language Processing (NLP) systems, which in turn enable human-machine interaction. Chances are most of us have used one (or more) of these systems, such as the digital assistants on websites, also known as chatbots. ChatGPT, of course, takes the relevant technology several steps further.

For its “training”, ChatGPT was based on an LLM that was then fine-tuned through reinforcement and supervised learning, with humans acting as trainers in both cases (a prerequisite for supervised learning, but not for reinforcement learning). This is what allows ChatGPT to give the illusion of chatting with a real person.

ChatGPT can be used in a wide range of applications: as a more flexible and natural digital assistant, for constructive discussion, for drafting personalized communication (for example email replies), for creating social media content with a special emphasis on product promotion, etc. However, this particular technology also seems to have a dark side.

For example, there are reports of it encouraging users to commit suicide or to engage in dangerous practices (e.g. “plug the pen in!”). But one of the most serious ramifications is that malicious users are already using ChatGPT both to create phishing emails, which look extremely plausible because they use correct syntax and natural expressions, and to create malware, even without any programming knowledge!

So is ChatGPT a real threat? Unfortunately, this question does not have an easy answer. Indeed, as early as the end of December 2022, malicious users began to actively “experiment” with how to take full advantage of the new technology. Schools and universities are also starting to see the emerging challenges (ChatGPT can potentially write a full thesis).

On the other hand, it can also be used to the advantage of defenders. For example, it can check code for security holes or support the creation of protection tools. A limiting factor is cost, which is far from negligible (and is one of the reasons behind the conversion from a non-profit organization to a regular corporation).

The technical infrastructure required to “train” models with billions of parameters calls for arrays of suitable servers (e.g. the DGX A100 multi-GPU server, at a cost of $200,000), while the energy consumption, both for operating the servers and for properly cooling the datacenters, reaches astronomical heights. It is no exaggeration to estimate these costs in the hundreds of millions of dollars (which makes mining infrastructure look like a game!).

In practice, it all boils down to the same question: how much morality “fits”, or should fit, into technological development? Shortly before World War II broke out, various scientists had not only laid the theoretical groundwork but had also proposed (by every means available) the creation of a weapon that would exploit nuclear energy. The weapon was eventually built and used twice, before ushering in a balance of terror and bringing humanity to the brink of (self-)destruction.

Dolly, a Finn Dorset sheep born in 1996, was the first mammal to be cloned from an adult somatic cell. Although highly innovative, the method met with considerable backlash, and although experiments continued (especially in China), the related technology did not advance, at least not as far as it could have.

In closing, we should emphasize the following: technology is “born” neutral, and it is its use by humans that determines which side, good or bad, it will take. What is certain is that our world is changing drastically.